The Sensor Crisis.

I started with the Raspberry Pi Camera Module 3 and its delicate CSI ribbon cable. It worked beautifully on the desk and terribly on the body. The ribbon was short, fragile, and comically ill-suited to weaving through a T-shirt. Within a week I had pivoted to an Arducam IMX708 USB module.

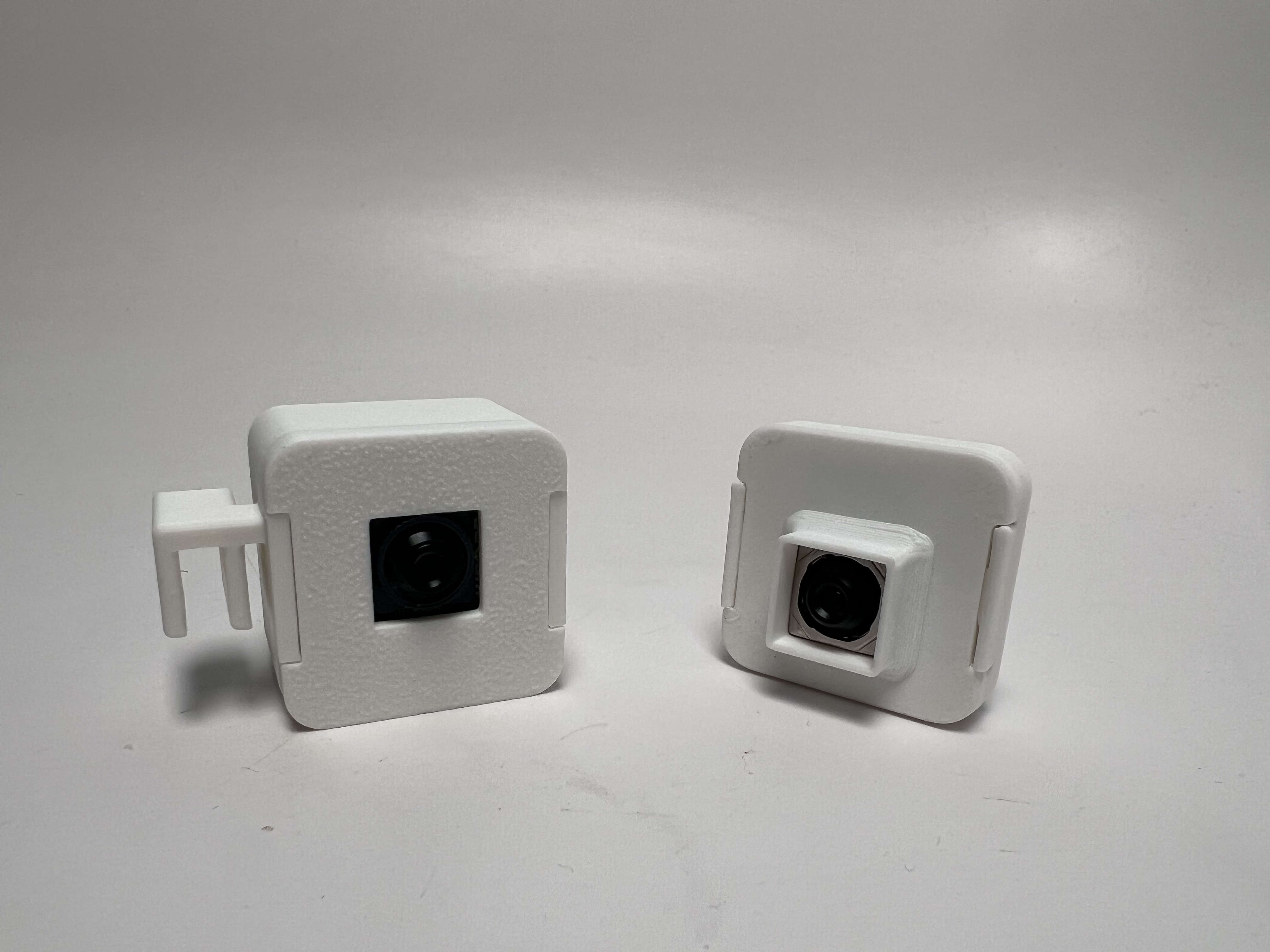

The USB path meant slightly bulkier hardware, but it also brought standard V4L2 drivers, a replaceable USB-C cable, and a 3D-printed enclosure that could finally be designed around a normal port instead of a precious slot.
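As a rough illustration of what "standard drivers" buys you: on Linux, a V4L2 camera shows up as an ordinary `/dev/videoN` node, so finding it takes nothing beyond the standard library. The sketch below is not the code from this build. The helper name `list_video_devices` and its `dev_dir` parameter are made up for the example, and it assumes the usual `/dev/videoN` naming.

```python
import re
from pathlib import Path


def list_video_devices(dev_dir="/dev"):
    """Return V4L2-style device nodes (video0, video1, ...) sorted by index.

    Hypothetical helper for illustration; assumes the usual Linux
    /dev/videoN naming for V4L2 capture devices.
    """
    root = Path(dev_dir)
    if not root.is_dir():
        return []

    pattern = re.compile(r"^video(\d+)$")
    found = []
    for entry in root.iterdir():
        match = pattern.match(entry.name)
        if match:
            # Keep the numeric index so video10 sorts after video2.
            found.append((int(match.group(1)), str(entry)))
    return [path for _, path in sorted(found)]


if __name__ == "__main__":
    for path in list_video_devices():
        print(path)
```

One caveat: a single USB camera can expose more than one node (for example, a separate metadata node), so the first entry in the list is not guaranteed to be the one that delivers frames.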